How I produce the @earthin24 yearly summary

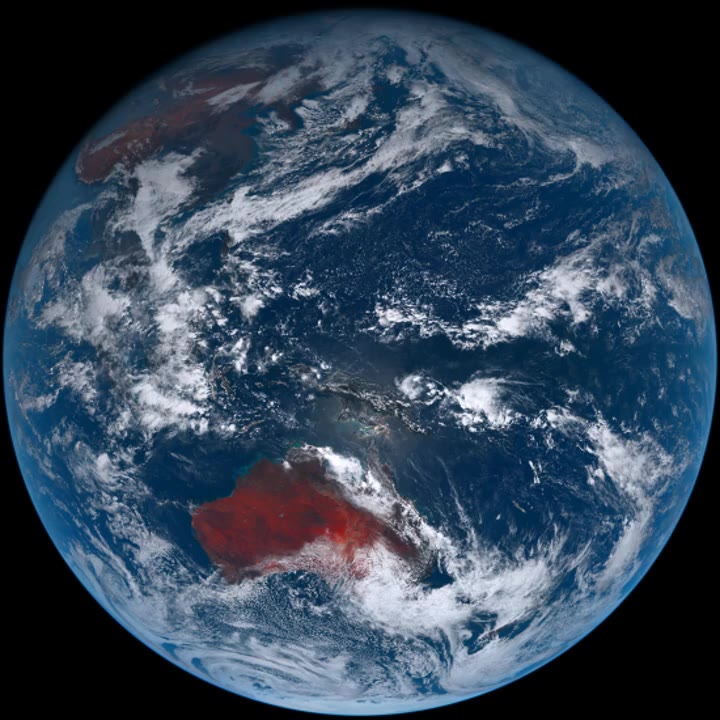

Every year I produce a timelapse video of my @earthin24 twitter bot for each of the 5 satellites that it tracks.

Earth in 2021, a year in review thread 🧵 pic.twitter.com/L485FyF7EH

— Earth (@earthin24) January 3, 2022

This year was the first year I built, tweeted, and stored each video and fulldisc frame inside github using their actions infrastructure. So my usual process of ssh’ing into my VPS and running some flaky scripts to generate the videos would have to be changed.

A quick summary of how the bot operates

Each day I have 5 scheduled actions that run the following:

- Download 24hrs worth of images.

- Combine images into an mp4.

- Compose tweet and upload a video.

- Save mp4 and one still image as an artifact of the action run.

Then every month another scheduled job runs to grab all the action artifact files, tarball them, and attach that to a monthly release. The action then deletes all artifacts, attached to any action runs, to free up space.

Getting the data

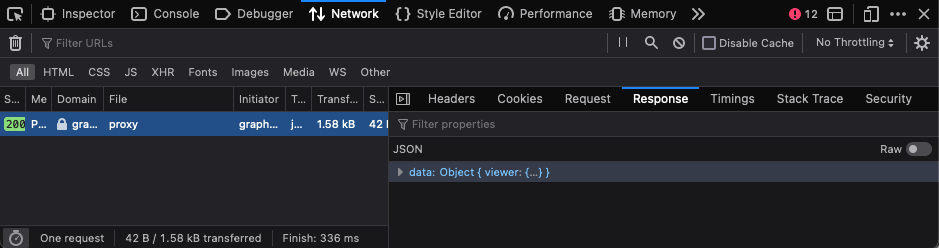

First thing was using the GitHubs graphql endpoint to get the last 12 releases, 1 for each month of 2021, and query for the release asset download urls.

{

repository(name: "earthin24", owner: "ryanseddon") {

releases(first: 12) {

edges {

node {

releaseAssets(first: 1) {

edges {

node {

downloadUrl

}

}

}

}

}

}

}

}

From here I just dumped the JSON response into the browser console so I can manipulate the response and get the urls needed.

Rather than mess with generating a PAT to curl each asset url via my cli I just wrote some JS to open each url in a new browser tab that is already authenticated and just download each zip file from there.

assetsURLs.data.repository.releases.edges.forEach((edge) => {

const [asset] = edge.node.releaseAssets.edges;

const {downloadUrl} = asset.node;

window.open(downloadUrl);

})

assetURLs is the response copied from the GitHub graphql explorer page within the browser network devtools. I then just ran the above code within the browser console.

Note your browser will block all the windows from opening as this action wasn’t initiated by a user e.g. a click/touch. You will need to allow the popups temporarily for the urls to open.

Over to the CLI

Now we have our assets we need to extract them, each artifact contains a months worth of videos/stills as zip files.

This part is a bit manual as I need to extract out each artifact into a folder denoting its month e.g. 01, 02, 03 etc. Once that is complete I can write a bash loop to extract out the fulldisc shot image.

Extract and sequentially rename

i=0; for f in **/msgiodc-*.zip; do unzip -p "$f" 00.jpg > "${i}.jpg"; ((i=$i+1)); done

This runs within the parent folder container the extracted artifacts into their respective months.

As each fulldisc shot has the same name 00.jpg we also sequentially rename it in numbered order to make it easier to produce the timelapse with ffmpeg.

Pad file names

The next step is to pad the filenames with at least three leading zeros. This makes sure ffmpeg doesn’t combine frames out of order.

for f in *.jpg; do printf "%s\n" "${f%.jpg}" |

xargs printf "%0.4i.jpg" |

xargs mv "$f"; done

The above bash script loops over all jpgs, extracts the number, pads it with printf, and then renames the file.

I find adding echo to destructive commands can help you see what the outcome of a command is without messing things up.

for f in *.jpg; do printf "%s\n" "${f%.jpg}" |

xargs printf "%0.4i.jpg" |

xargs echo mv "$f"; done

Given a folder with images names 1.jpg, 2.jpg etc. The bash script would output:

mv 1.jpg 0001.jpg

mv 2.jpg 0002.jpg

mv 3.jpg 0003.jpg

mv 4.jpg 0004.jpg

mv 5.jpg 0005.jpg

Missing fulldisc shots!

It turns out automation is great, but when you make a mistake and it still works without error it can be bad. In my case I was getting the upload artifact step to add the mp4 and the still image, it’s just that I was asking it to upload 00.jpg and not 000.jpg. My himawari8 actions were missing the fulldisc shots, eek.

Luckily the mp4 was still there and ffmpeg makes it fairly trivial to extract a single frame from a video! Saved.

i=0; for f in *.mp4; do ffmpeg -i "$f" -vf 'select=eq(n\\\,0)' -q:v 3 "${i}.jpg"; ((i=$i+1)); done

Another trusty bash for loop to go over all mp4 files and get ffmpeg to extract the first frame from each one and save it as the value of i.jpg. Once that is done I followed the same steps above to pad it out.

Not everything should be automated

I could automate this process but it’s only once a year and I like the reflection and new year enthusiasm it brings when I sit down to produce this yearly summary.